Using Citizen Science Data as Pre-Training for Semantic Segmentation of High-Resolution UAV Images for Natural Forests Post-Disturbance Assessment


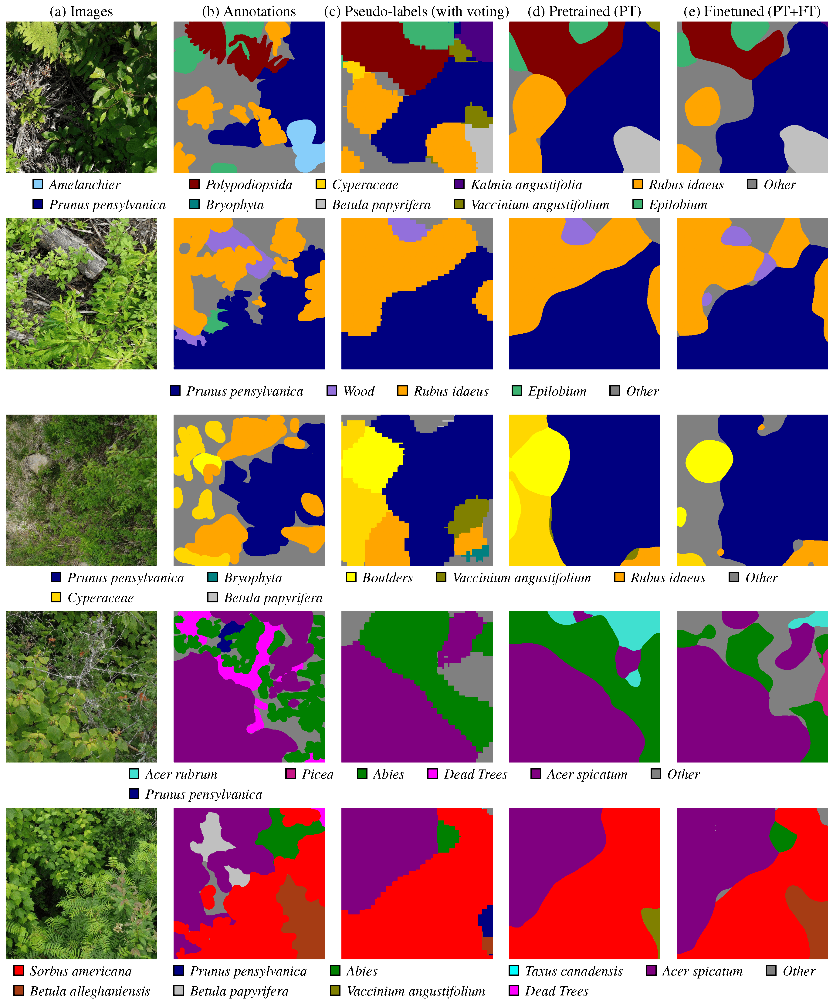

Over the past few months, I contributed to the paper "Using Citizen Science Data as Pre-Training for Semantic Segmentation of High-Resolution UAV Images for Natural Forests Post-Disturbance Assessment", published in the special issue "Classification of Forest Tree Species Using Remote Sensing Technologies: Latest Advances and Improvements" of the MDPI journal Forests. The paper proposes a novel pre-training approach for semantic segmentation of UAV imagery: a classifier trained on citizen science data auto-labels over 140,000 images, and a segmentation network pre-trained on them achieves a higher F1 score (43.74%) than one trained solely on manually labeled data (32.45%, as reported in the abstract). With this paper, we highlight the importance of AI for large-scale environmental monitoring of dense and vast forested areas, such as those in the province of Quebec.

Here is the abstract:

The ability to monitor forest areas after disturbances is key to ensure their regrowth. Problematic situations that are detected can then be addressed with targeted regeneration efforts. However, achieving this with automated photo interpretation is problematic, as training such systems requires large amounts of labeled data. To this effect, we leverage citizen science data (iNaturalist) to alleviate this issue. More precisely, we seek to generate pre-training data from a classifier trained on selected exemplars. This is accomplished by using a moving-window approach on carefully gathered low-altitude images with an Unmanned Aerial Vehicle (UAV), WilDReF-Q (Wild Drone Regrowth Forest—Quebec) dataset, to generate high-quality pseudo-labels. To generate accurate pseudo-labels, the predictions of our classifier for each window are integrated using a majority voting approach. Our results indicate that pre-training a semantic segmentation network on over 140,000 auto-labeled images yields an F1 score of 43.74% over 24 different classes, on a separate ground truth dataset. In comparison, using only labeled images yields a score of 32.45%, while fine-tuning the pre-trained network only yields marginal improvements (46.76%). Importantly, we demonstrate that our approach is able to benefit from more unlabeled images, opening the door for learning at scale. We also optimized the hyperparameters for pseudo-labeling, including the number of predictions assigned to each pixel in the majority voting process. Overall, this demonstrates that an auto-labeling approach can greatly reduce the development cost of plant identification in regeneration regions, based on UAV imagery.
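
To make the moving-window and majority-voting idea from the abstract more concrete, here is a minimal sketch in plain Python. It is not the code from the paper: the function `pseudo_label`, the `toy_classifier`, the parameter names (`window`, `stride`, `num_classes`), the tie-breaking rule, and the use of `-1` for uncovered pixels are all assumptions made for illustration. In the paper, the classifier is a network trained on iNaturalist exemplars, while here it is a simple brightness threshold on a grayscale grid.

```python
from typing import Callable, List

Grid = List[List[float]]  # hypothetical image type: a 2-D grid of pixel values


def pseudo_label(image: Grid, classify: Callable[[Grid], int],
                 window: int, stride: int, num_classes: int) -> List[List[int]]:
    """Slide a square window over the image, classify each window,
    and give every pixel the class that most covering windows voted for."""
    height, width = len(image), len(image[0])
    # votes[y][x][c] = number of windows covering (y, x) that predicted class c
    votes = [[[0] * num_classes for _ in range(width)] for _ in range(height)]

    for top in range(0, height - window + 1, stride):
        for left in range(0, width - window + 1, stride):
            patch = [row[left:left + window] for row in image[top:top + window]]
            label = classify(patch)  # one prediction for the whole window
            for y in range(top, top + window):
                for x in range(left, left + window):
                    votes[y][x][label] += 1

    labels = []
    for y in range(height):
        row = []
        for x in range(width):
            counts = votes[y][x]
            if any(counts):
                # Majority vote; ties go to the lowest class index (assumption).
                row.append(counts.index(max(counts)))
            else:
                # No window covered this pixel: mark it as "ignore" (assumption).
                row.append(-1)
        labels.append(row)
    return labels


def toy_classifier(patch: Grid) -> int:
    """Stand-in classifier: class 1 if the patch is bright on average, else 0."""
    values = [v for row in patch for v in row]
    return 1 if sum(values) / len(values) >= 0.5 else 0


if __name__ == "__main__":
    # Left half dark, right half bright.
    image = [[0.0] * 4 + [1.0] * 4 for _ in range(4)]
    for row in pseudo_label(image, toy_classifier, window=4, stride=1, num_classes=2):
        print(row)
```

In this sketch, the stride controls how many predictions each pixel receives: when the stride divides the window size, an interior pixel of a large image is covered by about `(window / stride) ** 2` windows. This is one plausible way to read the "number of predictions assigned to each pixel" hyperparameter mentioned in the abstract, though the paper may parameterize it differently.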

Links

For more info,